How to Build an Enterprise Deep Learning Platform, Part Two

May 27, 2020

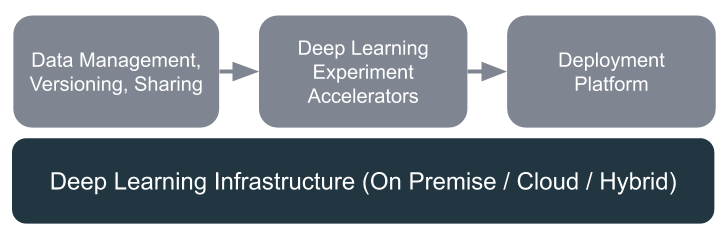

In the first post in this series, we talked about the issues slowing down deep learning teams and why you want to build a powerful machine learning platform to accelerate your AI development. In this post we’ll survey the open source ML software landscape and lay out an end-to-end suite for cranking out new models at scale.

Part three of the series is available now!

Building ML infrastructure

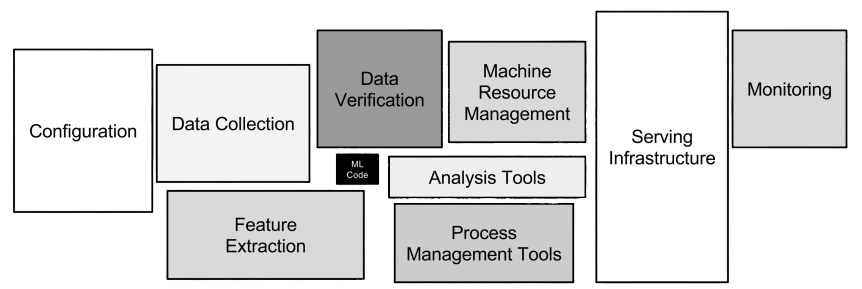

You can build deep learning infrastructure in a lot of ways, but some key components stand out as utterly essential:

Storage: The datasets used to train deep learning models can grow massive and out of control quickly. You’ll want a cost-effective, scalable storage backend to wrangle all that data. We’ve talked to a lot of teams and found most data ops teams have standardized on object storage, like Amazon S3. If you’re on-prem first, a shared file system can also do the job.

Training Infrastructure: You’ll need GPUs to train your models, and probably lots of them to parallelize training.

- On Prem: If you’re dedicated to saving money and working on-premises, you’ll need to set up a GPU cluster with enough GPUs to meet the demands of your ML team. Managing a GPU cluster, along with the tools your data scientists need to do their job, is hard, so you’ll want a software layer that simplifies using that hardware for your ML team.

- Cloud: Cloud GPUs can get expensive, but they’re flexible and capable of scaling to whatever size your group needs. Expecting your ML scientists to master cloud infrastructure is a big ask, and you’ll face some serious stumbling blocks. You’ll need a way to abstract the hardware so that ML scientists can focus more on building models and less on infrastructure management.

Deployment Infrastructure: Deployment varies from one ML model to the next, but the most common deployment pattern exposes models as microservices. Your smoothest path to managing a GPU cluster will be Kubernetes, which gained traction because it has the wisdom of a decade of container management baked into it by Google.

Building your Software Layer

Data Management, Versioning, Sharing

The key data problems you’ll need to solve quickly are sharing, preprocessing pipelines, and versioning. Data changes over time: new data gets collected, labels are improved, and the code that transforms and preps that data gets updated too. If your code, your models, and your data are all changing at the same time, how do you keep track of it all? You’ll want a strong data versioning platform to keep all those changes in check. Without the right tools to manage this changing data, collaboration becomes next to impossible. The best-in-class open source data versioning and management tools I’ve seen are Pachyderm and DVC.

Pachyderm runs on Kubernetes and delivers a copy-on-write filesystem on top of your object store. It gives you Git-like data versioning and scalable data pipelines. If you’re already leveraging object storage and Kubernetes, it’s really easy to set up. Pachyderm leverages Kubernetes to scale the pipelines you use to transform and prepare data for machine learning. Datasets live in repositories, which version every single change you make to that data and are simple to share with your team.

DVC is significantly more lightweight than Pachyderm, running locally and adding versioning on top of your local storage solution. DVC simply integrates into existing Git repositories to track the version of data that was used to run experiments. ML teams can also define and execute transformation pipelines with DVC; however, the biggest drawback of DVC is that those transformations run locally and are not automatically scaled to a cluster. Notably, DVC does not handle the storage of data, simply the versioning.
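To make the idea of versioning data without storing it more concrete, here is a minimal sketch in Python. It is not how DVC or Pachyderm are implemented. It only illustrates the general pattern of recording a content hash in a small pointer file that you could commit to Git while the data stays wherever it already lives. The pointer file format and the function names (`file_digest`, `write_pointer`, `matches_pointer`) are made up for this example.

```python
import hashlib
import json
from pathlib import Path


def file_digest(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def write_pointer(data_path: Path) -> Path:
    """Write a small JSON pointer file next to the data file.

    The pointer (a hypothetical format) is what you would commit to Git.
    The data itself stays in whatever storage you already use.
    """
    pointer = {
        "path": data_path.name,
        "sha256": file_digest(data_path),
        "size": data_path.stat().st_size,
    }
    pointer_path = data_path.with_name(data_path.name + ".pointer.json")
    pointer_path.write_text(json.dumps(pointer, indent=2, sort_keys=True))
    return pointer_path


def matches_pointer(data_path: Path, pointer_path: Path) -> bool:
    """Check whether the data on disk is the version the pointer recorded."""
    recorded = json.loads(pointer_path.read_text())
    return recorded["sha256"] == file_digest(data_path)
```

Committing the pointer file alongside your training code ties each experiment to an exact version of the data: if someone edits the dataset, `matches_pointer` returns `False` until a new pointer is written and committed.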

Determined: A Deep Learning Experimentation Platform

The process of building deep learning models is slow and laborious, even for an experienced team. It’s especially challenging for a small team trying to do more with less. Deep learning models can take weeks to train, and often need dozens of training runs to find effective hyperparameters. If the ML team is only working with GPUs hosted on a single machine, things are pretty straightforward, but as soon as your team reaches cluster scale, it will spend way more time writing code to work with the cluster than building ML models.
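To see why hyperparameter tuning eats up so many training runs, here is a minimal random search sketch in Python. Everything in it is illustrative: `validation_loss` is a toy stand-in for a full training run, and the search ranges are assumptions picked for the example. A real platform would run these trials in parallel across GPUs and typically use smarter search strategies.

```python
import math
import random


def validation_loss(learning_rate: float, dropout: float) -> float:
    """Toy stand-in for a full training run (hypothetical objective).

    The loss is lowest near learning_rate=1e-3 and dropout=0.3.
    """
    return (math.log10(learning_rate) + 3.0) ** 2 + (dropout - 0.3) ** 2


def random_search(num_trials: int, seed: int = 0):
    """Sample hyperparameters at random and keep the best trial."""
    rng = random.Random(seed)
    best_params, best_loss = None, float("inf")
    for _ in range(num_trials):
        params = {
            # Sample the learning rate log-uniformly between 1e-5 and 1e-1.
            "learning_rate": 10 ** rng.uniform(-5, -1),
            "dropout": rng.uniform(0.0, 0.6),
        }
        # In practice, each call here is a training run that can take
        # hours or days.
        loss = validation_loss(**params)
        if loss < best_loss:
            best_params, best_loss = params, loss
    return best_params, best_loss
```

Calling `random_search(50)` evaluates 50 configurations one after another. Swap the toy objective for a real training job and that loop is exactly the kind of work you want spread across a cluster.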

Determined is an open source platform built to accelerate deep learning experimentation for small to large machine learning teams. It fills a number of key roles in your AI/ML stack:

- Hardware abstraction that frees model developers from having to write systems glue code

- Tools for accelerating experiments, e.g., easy-to-use distributed training and hyperparameter tuning

- A collaboration platform to allow teams to share their ML research and work together more effectively

- Cost reduction for cloud-first companies, by training models on GPU instances that autoscale with demand, including preemptible instances

Determined is where your machine learning scientists will build, train, and optimize their deep learning models. By removing the need to write glue code for every new model, Determined lets your team spend more time creating models that differentiate you from your competitors.

Determined is open source, and you can install it on bare metal or in the cloud, so it integrates well with most existing infrastructure.

Deployment Platform

Without the right tools, most ML teams spend a lot of time creating and hosting REST endpoints, which contributes to deployment taking months instead of days. There are great tools out there to make this process easier for your data scientists, such as Seldon Core and offerings from cloud providers.
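For a sense of what hand-rolling one of these REST endpoints involves, here is a minimal sketch that uses only the Python standard library. The `predict` function is a placeholder for a real model, and the `/predict` route, the JSON shape, and the port are assumptions made for the example. It leaves out everything a production service needs, such as batching, authentication, GPU management, and scaling.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def predict(features):
    """Placeholder model (hypothetical): returns the mean of the inputs."""
    return sum(features) / len(features)


class PredictHandler(BaseHTTPRequestHandler):
    """Handle POST /predict with a JSON body like {"features": [1.0, 2.0]}."""

    def do_POST(self):
        if self.path != "/predict":
            self.send_error(404, "Unknown endpoint")
            return
        length = int(self.headers.get("Content-Length", 0))
        try:
            payload = json.loads(self.rfile.read(length))
            result = predict(payload["features"])
        except (ValueError, KeyError, TypeError, ZeroDivisionError):
            self.send_error(400, "Expected a JSON body with a 'features' list")
            return
        body = json.dumps({"prediction": result}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def make_server(port: int = 8080) -> HTTPServer:
    """Build a server bound to localhost; the caller decides when to run it."""
    return HTTPServer(("127.0.0.1", port), PredictHandler)
```

Running `make_server().serve_forever()` starts the service locally. Multiply this by every model, add monitoring and rollouts, and it becomes clear why teams reach for a dedicated deployment tool instead.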

Seldon Core is an open source project that significantly simplifies the process of deploying a model to an existing Kubernetes cluster. If your team is already comfortable with Kubernetes, Seldon makes deployment much easier. If not, Seldon offers an enterprise product that will help, or you may want to build an interface on top of Seldon to help bridge the gap between ML developers and deployment.

AWS, GCP, and Azure all have deployment tools that will help users deploy models on their cloud infrastructure. These tools largely abstract the infrastructure from the user, but require a thorough understanding of their respective cloud platforms. If you’re already locked into a cloud provider, these tools are almost certainly the fastest way to enable deployment, but if you ever want to leave that platform, you’ll find you’re locked in tight.

Even worse, the cloud platforms won’t always support the frameworks you need, because cutting-edge frameworks don’t get the same support as well-known frameworks. If you’re using PyTorch or TensorFlow, you’re in good shape, but as soon as you need something that just came fresh out of a research lab, you may have to wait for cloud platforms to catch up. Better to have an agnostic and flexible open source framework that lets you bring whatever tools you want to the job.

Wrapping Up

By pulling together a suite of open source tools, you’ll build an end-to-end platform much faster, enable more effective collaboration among team members, and ultimately deliver production-grade models more quickly.

In the third and final post in this series, we’ll give a concrete example of an end-to-end ML platform in action. We’ll weave all these tools together so that your team can seamlessly manage their data, models, and deployments all from within their development environment. Lastly, we’ll show you what it all looks like, by demonstrating an end-to-end example with a Jupyter Notebook you can try on your own.